Have you done a “digital transformation” but find your business fails to get real value from your data? Are you finding new data-related pain points, and growing wary of vendors selling vague buzzwords and overly complex solutions? If so, then this is the checklist for you.

I’ve been building and managing data platforms since before the term “Big Data” was even coined. As the CTO of several companies during my career, I’ve heard many data vendor pitches, and I know when crucial features are missing.

Problem: Complexity

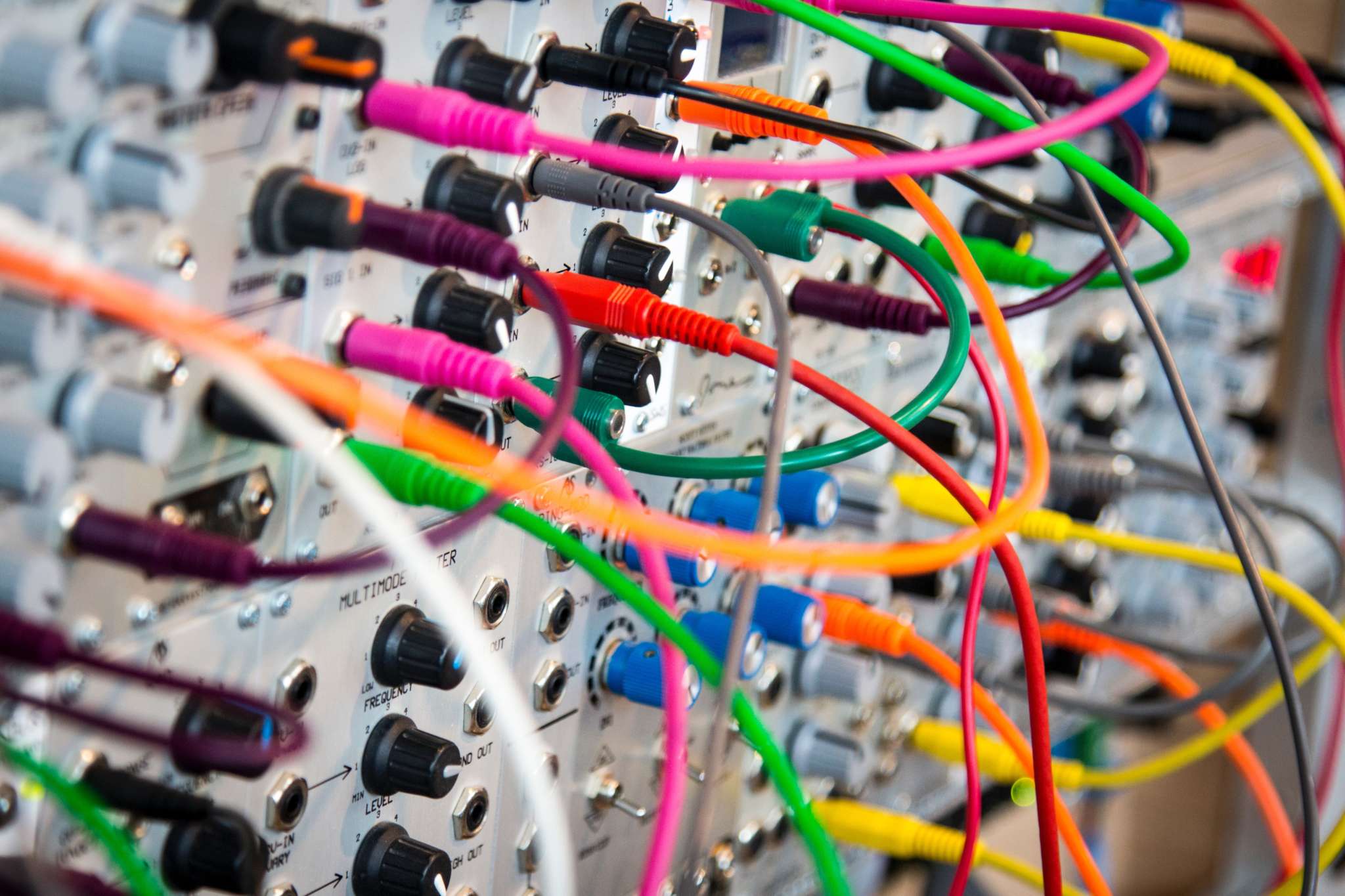

Data is complex. There are many vendors with solutions on how to manage your data and plenty of buzzwords to make sense of data. In order to meet multiple needs, you’ll end up stitching together many technologies and internal processes. It’s easy to get stuck in the weeds of implementation and lose track of the real value and insights that can come from data.

Complexity can result in:

- Untrusted data

- Expensive data management

- Time-consuming “data chores”

- Failure to drive meaningful business value

Solution: Checklist

The solution to complexity is to use a set of guiding principles to bring simplicity to the design of your data platform solution. The best solution we’ve found for ourselves and our clients is to use a checklist.

A checklist is great because it’s easy to evaluate your progress with simple yes or no answers. It is also broadly applicable to a wide range of situations, providing a common “north star”. A checklist helps you move from implementing buzzwords like “big data” and “data lakes” to implementing real solutions.

1. Is your data in one place?

The data that is important to your business comes from many different sources. To really understand your business, your data should be made accessible from a single system. Such a system removes the friction of conducting new data pulls, setting up new credentials, or approving new budget items. You can either copy your data whole into a single system or virtualize the data access layer. Either way, there should be one system through which your data is accessed.

With all of your data in one place, team members are operating off of the same source data. Nothing destroys trust in data faster than having different numbers for the same thing and being unable to find the true value. A single source of truth is important to enable a truly data-driven decision-making process.

2. Is your data self-service?

Can all of your internal consumers access new data from your data platform without making internal “support tickets”? This is the difference between Data Engineering, where technical team members might do daily data pulls, and DataOps Engineering, where engineers manage the automation that does those daily data pulls.

Your data scientists and technical analysts should be able to use SQL or code (Python or R) to directly access data sets. They should be able to find and label their data via a metadata tracking system. From there, they can trace the lineage of how a given data set was created.
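As a rough illustration of what lineage tracing means, here is a minimal sketch in Python. The `DatasetRecord` class, the `trace_lineage` function, and the data set names are all hypothetical, made up for this example; a real metadata tracking system will have its own model and API.

```python
from dataclasses import dataclass, field


@dataclass
class DatasetRecord:
    """Hypothetical catalog entry: a data set, its labels, and its direct inputs."""
    name: str
    labels: set[str] = field(default_factory=set)
    parents: list[str] = field(default_factory=list)


def trace_lineage(catalog: dict[str, DatasetRecord], name: str) -> list[str]:
    """Return every upstream data set of `name`, nearest ancestors first."""
    lineage: list[str] = []
    queue = list(catalog[name].parents)
    while queue:
        current = queue.pop(0)
        if current in lineage:
            continue  # already reached through another path
        lineage.append(current)
        queue.extend(catalog[current].parents)
    return lineage


# Illustrative catalog: two raw sources feeding a cleaned set and a KPI set.
catalog = {
    "raw_orders": DatasetRecord("raw_orders", {"source:shop"}),
    "raw_customers": DatasetRecord("raw_customers", {"source:crm"}),
    "clean_orders": DatasetRecord("clean_orders", {"validated"}, ["raw_orders"]),
    "customer_revenue": DatasetRecord(
        "customer_revenue", {"kpi"}, ["clean_orders", "raw_customers"]
    ),
}

print(trace_lineage(catalog, "customer_revenue"))
# ['clean_orders', 'raw_customers', 'raw_orders']
```

The point is that an analyst can answer "where did this number come from?" with one lookup, without filing a ticket.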

Your business managers should be able to load and explore dashboards in a business intelligence tool. It should be easy for them to monitor KPIs, dive deeper into root causes, and set up new dashboards.

3. Is your data checked for validity?

In order for data to be useful, it needs to be trusted. And in order for data to be trusted, it needs to be validated. This doesn’t mean that there will never be problems with your data. It means that there should be a system in place to catch problems before they hit internal data consumers unexpectedly.

Start with regular manual sanity checks. Someone with the right context should ensure that recent totals make sense. They should also check that no unexpected values are coming in (such as mis-named marketing campaigns). And, they should have access to the right technical team members to be able to find the source of any data problems and decide how to resolve them. Set up “data owners” for different sources of data to do these checks and to take responsibility for the resolutions.

Next, apply automation to check for potential problems. Set up automated checks based on the real data quality problems that you find manually, to avoid trying to boil the ocean. Engineers can set up checks in your data pipelines with automated alerts, or make tools to automate parts of the manual checks. Analysts can set up dashboards which query for and highlight data problems. Automation enables you to handle more data flows without also multiplying the time and resources spent on data validation.
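To make this concrete, here is a small sketch of two automated checks that mirror the manual ones described above: a sanity check on recent totals and a check for unexpected campaign names. The function names, the 50% tolerance, and the sample data are assumptions chosen for illustration, not a recommended standard.

```python
def check_daily_total(total: float, recent_totals: list[float], tolerance: float = 0.5) -> list[str]:
    """Flag a total that deviates from the recent average by more than `tolerance` (a fraction)."""
    if not recent_totals:
        return []  # nothing to compare against yet
    average = sum(recent_totals) / len(recent_totals)
    if average and abs(total - average) / abs(average) > tolerance:
        return [f"daily total {total} is far from the recent average {average:.1f}"]
    return []


def check_campaign_names(rows: list[dict], known_campaigns: set[str]) -> list[str]:
    """Flag campaign names that are not in the approved list (for example, typos)."""
    unexpected = sorted({row["campaign"] for row in rows} - known_campaigns)
    return [f"unexpected campaign name: {name!r}" for name in unexpected]


# Illustrative data: one mis-named campaign and one suspiciously low total.
rows = [
    {"campaign": "spring_sale", "orders": 12},
    {"campaign": "sprng_sale", "orders": 3},
]
problems = check_daily_total(40.0, [100.0, 110.0, 90.0])
problems += check_campaign_names(rows, {"spring_sale", "newsletter"})

for problem in problems:
    print("ALERT:", problem)  # in a real pipeline this would notify the data owner
```

Each check returns a list of messages rather than raising, so a pipeline step can collect all of the problems and send a single alert.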

4. Is your data resilient by design?

Beyond checking for problems, design your data platform to make it easy to resolve or avoid problems to begin with. This is the idea of data resiliency.

If there is a problem with one of your data sources, and data has to be pulled in twice, can that be done without causing problems? If you are pulling in aggregate numbers, like total customer orders, and just adding them to a running total, then pulling in the same data twice could lead to counting it twice. However, if you pull in each individual order (event logging) and use unique ids to ensure that you don’t store duplicates, then you can recompute the total from the individual records at any time. By being careful in how your data represents the world, you can make it resilient against issues like double counting.
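The difference between the two approaches can be sketched in a few lines of Python. The function names and the `order_id` field are hypothetical, and a plain dictionary stands in for whatever store your platform uses.

```python
def ingest_running_total(current_total: int, batch: list[dict]) -> int:
    """Fragile: adds each batch to a running count, so a replayed batch is counted twice."""
    return current_total + len(batch)


def ingest_orders(store: dict[str, dict], batch: list[dict]) -> dict[str, dict]:
    """Resilient: keys each order by its unique id, so a replayed batch overwrites itself."""
    for order in batch:
        store[order["order_id"]] = order
    return store


batch = [
    {"order_id": "A-1", "amount": 20},
    {"order_id": "A-2", "amount": 35},
]

# Simulate the same batch being pulled in twice after a source problem.
running_total = ingest_running_total(0, batch)
running_total = ingest_running_total(running_total, batch)

orders: dict[str, dict] = {}
ingest_orders(orders, batch)
ingest_orders(orders, batch)

print(running_total)  # 4: the two orders were counted twice
print(len(orders))    # 2: duplicates collapsed by unique id
```

Because the second approach keeps the individual events, any total can be recomputed from `orders` at any time, which makes re-running a pull a safe operation.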

If there is a problem with past data and corrections need to be made, there should be a designated place to put such corrections into your data pipeline. This way, problems like incorrect labeling of data, or known data collection problems, can be solved once, instead of repeatedly by whoever is internally consuming data.
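One way to picture a designated place for corrections is a single lookup table that every pipeline run applies before data reaches consumers. This is only a sketch: the `CORRECTIONS` table, its entries, and `apply_corrections` are hypothetical names invented for this example.

```python
# Hypothetical corrections table: (field, wrong value) -> corrected value.
# Keeping it in one place means each known problem is fixed once.
CORRECTIONS = {
    ("campaign", "sprng_sale"): "spring_sale",
    ("country", "UK"): "GB",
}


def apply_corrections(rows: list[dict], corrections: dict = CORRECTIONS) -> list[dict]:
    """Return corrected copies of `rows`; the input rows are left untouched.

    Assumes field values are hashable (strings, numbers), as in the sample data.
    """
    corrected = []
    for row in rows:
        fixed = {
            key: corrections.get((key, value), value)
            for key, value in row.items()
        }
        corrected.append(fixed)
    return corrected


raw = [{"campaign": "sprng_sale", "country": "UK", "orders": 3}]
print(apply_corrections(raw))
# [{'campaign': 'spring_sale', 'country': 'GB', 'orders': 3}]
```

The raw data stays as it was collected, and the correction is recorded explicitly, so it can be reviewed or reversed later.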

If there is a problem caused by a change to a data pipeline, it should be easy to undo that change. If your system requires multiple hours of downtime in order to implement a single schema change, your system is brittle, not resilient.

Lastly, the schema of your data should be carefully designed to avoid problems to begin with. Relationships between data sets, even when there is “fuzzy logic” being applied, as with customer identity resolution, should be made explicit in your data.

5. Are you optimized for cost and performance?

Several technological advances in the past few years have greatly reduced data costs and increased query performance. Make sure that whatever solution you pick takes advantage of these modern advances.

Separate compute from storage. The storage layer for your data should be managed with a dedicated storage service, such as AWS S3 or Azure Blob Storage. This means that your expensive compute can be dynamically scaled up to meet additional queries, and then scaled down, potentially to zero, to save costs. It also ensures greater availability for your data, as you are no longer confined to the limits of particular instances.

Insist upon in-memory columnar data querying. This was the killer feature that let Apache Spark run in seconds the queries that would take Hadoop hours or days. Memory access is much faster than disk access, and any modern data platform should be optimized to take advantage of that speed. Also, columnar data storage greatly reduces the amount of memory spent on empty or redundant data.

Use optimized data storage. While data should be queried in-memory, it also needs to be stored. And when stored it should be done in an optimized form. Use a format like Apache Parquet, which uses explicit data types, columnar data layouts, and applies data compression. Where relevant, apply data deduplication to avoid paying for the storage of redundant data.
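To see why a columnar layout helps with redundant data, consider this toy example. It is not Parquet's actual on-disk format; it only sketches one idea that such formats build on, run-length encoding applied to a single column. The helper names are made up for this illustration, and the pivot assumes every row has the same fields.

```python
from itertools import groupby


def to_columns(rows: list[dict]) -> dict[str, list]:
    """Pivot row-oriented records into one list of values per column."""
    columns: dict[str, list] = {}
    for row in rows:
        for key, value in row.items():
            columns.setdefault(key, []).append(value)
    return columns


def run_length_encode(values: list) -> list[tuple]:
    """Collapse consecutive repeated values into (value, count) pairs."""
    return [(value, len(list(group))) for value, group in groupby(values)]


rows = [
    {"country": "US", "status": "paid"},
    {"country": "US", "status": "paid"},
    {"country": "US", "status": "refunded"},
    {"country": "CA", "status": "paid"},
]

columns = to_columns(rows)
print(run_length_encode(columns["country"]))  # [('US', 3), ('CA', 1)]
```

When values of the same column sit next to each other, repeats become a short count instead of many copies, which is much harder to achieve when each row mixes values of different types.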

6. Is your architecture appropriate for your data latency?

All data has a certain latency related to it. That is, you might have a data source which is only refreshed once a day, and so it has a data latency of one day. You might only take action upon data on a weekly basis, in which case there’s no need for data latencies less than a week. In general, you will pay more to have lower data latency and can make architectural choices to pay less for longer data latency.

If you require very low latencies of less than a second, then you have streaming data, and you should use dedicated streaming technologies like Apache Kafka. However, if you have data latencies of minutes, then you can use batch processing for simpler and cheaper solutions. If you have data latencies of hours or days, it may make sense to set up serverless infrastructure that scales down to zero when it is not running.
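The rule of thumb above can be written down as a small decision function. The exact cut-offs here are assumptions made for this sketch (the text only distinguishes sub-second, minutes, and hours or days), and the returned labels are illustrative, so treat this as a starting point for discussion rather than a sizing tool.

```python
from datetime import timedelta


def suggest_architecture(required_latency: timedelta) -> str:
    """Map a required data latency to a broad architectural style."""
    if required_latency < timedelta(seconds=1):
        return "streaming"  # for example, a dedicated technology like Apache Kafka
    if required_latency < timedelta(hours=1):  # assumed cut-off between minutes and hours
        return "batch"
    return "serverless batch"  # infrastructure that scales down to zero between runs


for latency in (timedelta(milliseconds=200), timedelta(minutes=5), timedelta(days=1)):
    print(latency, "->", suggest_architecture(latency))
```

Running this exercise per data flow, rather than once for the whole platform, keeps cheap daily feeds from being pushed through expensive low-latency infrastructure.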

Design the flows of data through your data platform in ways that are appropriate for the data latency of that flow. Just because your batch-based system can mimic streaming through mini-batches does not mean that you should use it for streaming. And just because you have a robust streaming solution does not mean that you should burden it with batch data.

7. Is your data platform easily connectable?

Your data is only as valuable as your ability to make use of it. You will need to connect it to the external systems you know of today, and you will also need to anticipate future connections.

You should be able to easily connect to new data sources that are relevant to your business. Choose technologies where connecting to most new SaaS data sources or data stores is just a matter of configuration, not something that requires custom integration. But also leave open the option to do custom integrations where needed.
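Here is a minimal sketch of what "configuration, not custom integration" can look like, while still leaving room for custom connectors. Everything in it is hypothetical: the `CONNECTORS` registry, the `register` decorator, and the two connector kinds are invented for this example and do not correspond to any particular product.

```python
from typing import Callable

# Registry of connector kinds; adding a source of a known kind needs only a config entry.
CONNECTORS: dict[str, Callable[[dict], list[dict]]] = {}


def register(kind: str):
    """Decorator that makes a connector available under the given kind name."""
    def decorator(func: Callable[[dict], list[dict]]):
        CONNECTORS[kind] = func
        return func
    return decorator


@register("static")
def static_source(config: dict) -> list[dict]:
    """Stand-in for a prebuilt connector: returns the rows listed in the config."""
    return list(config["rows"])


@register("custom_csv_text")
def csv_text_source(config: dict) -> list[dict]:
    """A custom integration, registered the same way as the prebuilt ones."""
    header, *lines = config["text"].strip().splitlines()
    keys = header.split(",")
    return [dict(zip(keys, line.split(","))) for line in lines]


def load_sources(configs: list[dict]) -> list[dict]:
    """Load every configured source through its registered connector."""
    rows: list[dict] = []
    for config in configs:
        rows.extend(CONNECTORS[config["kind"]](config))
    return rows


configs = [
    {"kind": "static", "rows": [{"id": "1"}]},
    {"kind": "custom_csv_text", "text": "id,name\n2,Ada"},
]
print(load_sources(configs))  # [{'id': '1'}, {'id': '2', 'name': 'Ada'}]
```

New sources of an existing kind are added by editing `configs`, and only a genuinely new kind of source requires writing code.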

You should also be able to easily connect to systems that use your data, including business intelligence dashboards, financial reporting, marketing automation, and external analytics vendors. In particular, there is a growing ecosystem of data science vendors like Retina that can add important predictive analytics to your data.

Conclusion

At Retina, we are heavy users of data, and this checklist has guided our own technology choices. We’ve also advised some of our clients on making strategic technology choices, with the points in this checklist as a guide.

Hopefully you find this checklist useful as well. Bookmark it and share it with your own teams to ensure that, even if you don’t check all the items today, you have a list of improvements to implement next.